I have written about weaknesses in the Windows 10 TCP/IP CUBIC congestion control mechanism both in this blog (Slow performance of IKEv2 built-in client VPN under Windows) and in a number of threads on the Microsoft Q&A site (typically about upload speeds that are slow only under Windows, while other operating systems achieve good speeds); I also mentioned some links suggesting that improvements were under development.

The improvements did not surface in any mainstream Windows 10 version of which I am aware; however, Windows 11 does seem to incorporate substantial changes (at least an improved RFC 8985 (The RACK-TLP Loss Detection Algorithm for TCP) implementation with per-segment tracking), albeit with at least one minor bug that still limits performance (the bug is still present in Windows 11 build 22000.376).

The “bug” that I am referring to is a “sanity check” in the routine TcpReceiveSack. The problematic check is that if the SACK SLE (SACK Left Edge) is less than the acknowledgement number (i.e. the block appears to be a D-SACK), then the SACK SRE (SACK Right Edge) must also be less than the acknowledgement number; this check appears to be coded incorrectly, with the result that genuine D-SACKs are not recognized as such. This failure to recognize D-SACKs means that adaptive tuning of things such as the “reorder window” does not take place.
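For comparison, here is a minimal Python sketch of how a sender can classify the first SACK block of an ACK as a D-SACK, following the rules in RFC 2883 (the Linux stack applies similar tests). It is not the Windows code, and the function names are hypothetical. Sequence numbers are compared modulo 2^32, which handles wrap-around, and the right-edge test accepts an SRE at or below the acknowledgement number.

```python
SEQ_MOD = 1 << 32  # TCP sequence numbers are 32-bit and wrap around

def seq_before(a: int, b: int) -> bool:
    """True if sequence number a precedes b (wrap-around safe, signed 32-bit difference)."""
    return (a - b) % SEQ_MOD >= (1 << 31)

def seq_after(a: int, b: int) -> bool:
    """True if sequence number a follows b."""
    return seq_before(b, a)

def is_dsack(ack: int, blocks: list[tuple[int, int]]) -> bool:
    """Classify the first SACK block as a D-SACK using the RFC 2883 rules.

    blocks holds (SLE, SRE) pairs in the order they appear in the SACK option.
    """
    if not blocks:
        return False
    sle0, sre0 = blocks[0]
    if seq_before(sle0, ack):
        # Block starts below the cumulative ACK, so it reports duplicate data;
        # treat it as a valid D-SACK only if it also ends at or below the ACK.
        return not seq_after(sre0, ack)
    if len(blocks) > 1:
        sle1, sre1 = blocks[1]
        # Block lies inside the second block: a duplicate of out-of-order data.
        return not seq_before(sle0, sle1) and not seq_after(sre0, sre1)
    return False

# A duplicate segment whose right edge equals the ACK is a genuine D-SACK:
print(is_dsack(1000, [(500, 1000)]))   # True
print(is_dsack(1000, [(1500, 2000)]))  # False (ordinary SACK block)
```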

The initial size of the “reorder window” (RACK.reo_wnd) is RACK.min_RTT/4, and it can grow to a maximum size of SRTT (Smoothed Round Trip Time). For many configurations and degrees of packet reordering, the default size eliminates many unnecessary retransmissions. However, in my test configuration (TCP inside an IKEv2 tunnel between two systems on the same LAN, with substantial reordering introduced by the network adapter driver and a very low min_RTT of less than one millisecond), the default reorder window size is completely inadequate.
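A quarter of a sub-millisecond min_RTT is less than 250 µs. The following minimal Python sketch evaluates the RFC 8985 window formula, min(reo_wnd_mult × min_RTT/4, SRTT). The min_RTT values echo the two setups discussed in this post, but the SRTT values are hypothetical, and multiplier values above 1 are only reachable when D-SACKs are recognized.

```python
def reo_wnd(min_rtt: float, srtt: float, mult: int = 1) -> float:
    """RACK reordering window in seconds: mult * min_RTT / 4, capped at SRTT."""
    return min(mult * min_rtt / 4, srtt)

# Inputs in seconds; the SRTT values are assumptions for illustration only.
setups = {
    "IKEv2 tunnel on LAN": (0.0008, 0.002),   # min_RTT < 1 ms (assumed 0.8 ms)
    "SMB copy, real world": (0.009, 0.012),   # min_RTT about 9 ms
}
for name, (min_rtt, srtt) in setups.items():
    # Per RFC 8985, mult only grows above 1 in rounds where a D-SACK is seen.
    for mult in (1, 2, 4, 8):
        window_us = reo_wnd(min_rtt, srtt, mult) * 1e6
        print(f"{name}: mult={mult} reo_wnd={window_us:.0f} us")
```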

A one-byte patch to tcpip.sys can (more or less) “correct” this problem (the patch does not handle sequence number wrap-around), enabling performance tests with and without the patch to be performed. This patching is risky (it will eventually result in a bug check when the change is detected by PatchGuard), but I judged the value of the performance test results to be worth the inconvenience.

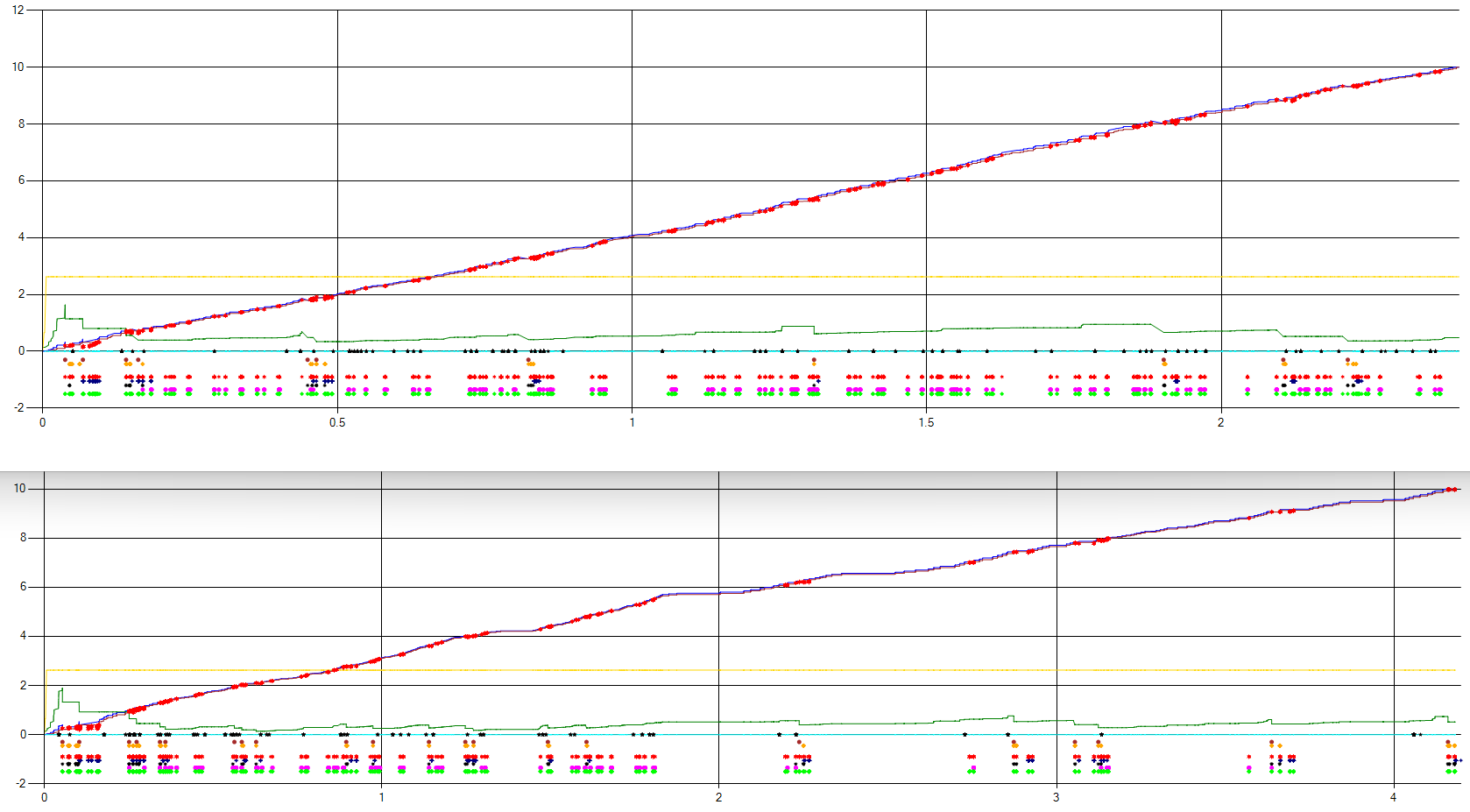

What follows are traces of two 10 MB “discards” (TCP port

9), with and without the patch:

With the patch (above), the 10 MB discard takes about 2.4 seconds; without the patch (below), it takes about 4.2 seconds.
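The two durations translate into approximate throughput figures. This rough Python calculation assumes that “10 MB” means 10^7 bytes of payload and ignores connection setup and header overhead, so the results are only approximate.

```python
def throughput_mbit_s(nbytes: int, seconds: float) -> float:
    """Average payload throughput in Mbit/s."""
    return nbytes * 8 / seconds / 1e6

SIZE = 10 * 10**6  # assumption: "10 MB" taken as 10^7 bytes
patched = throughput_mbit_s(SIZE, 2.4)
unpatched = throughput_mbit_s(SIZE, 4.2)
speedup = 4.2 / 2.4

print(f"patched   ~ {patched:.1f} Mbit/s")
print(f"unpatched ~ {unpatched:.1f} Mbit/s")
print(f"speed-up  ~ {speedup:.2f}x")
```

Under these assumptions the patched run is about 1.75 times as fast (roughly 33 versus 19 Mbit/s).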

The green line (the congestion window size) is reduced more without the patch, even though there is less “reordering” than in the patched test. The yellow line is the send window size (auto-tuned by the server) and is indeed approximately the minimum window size needed to achieve the theoretical maximum throughput.
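The minimum window size needed for maximum throughput is the bandwidth-delay product: the amount of data that must be in flight to keep the path busy for a full round trip. A smaller window leaves the path idle for part of each RTT. This minimal Python sketch uses a hypothetical 1 Gbit/s link and 1 ms RTT, which are illustrative numbers and not measurements from the traces.

```python
def min_window_bytes(bandwidth_bit_s: float, rtt_s: float) -> float:
    """Bandwidth-delay product: bytes that must be in flight to fill the path."""
    return bandwidth_bit_s * rtt_s / 8

# Hypothetical path: 1 Gbit/s with a 1 ms round-trip time.
print(f"{min_window_bytes(1e9, 0.001):.0f} bytes")  # 125000 bytes
```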

The dots on and below the x-axis are points at which “interesting” events occur; from the x-axis downwards:

- Packet reordering
- TcpEarlyRetransmit (Forward ACK (FACK), Recent ACK (RACK))
- TcpLossRecoverySend Fast Retransmit
- TcpLossRecoverySend SACK Retransmit
- TcpDataTransferRetransmit
- TcpTcbExpireTimer
- D-SACK
- TcpSendTrackerDetectLoss
- TcpSendTrackerSackReorderingDetected
- TcpSendTrackerUpdateReoWnd

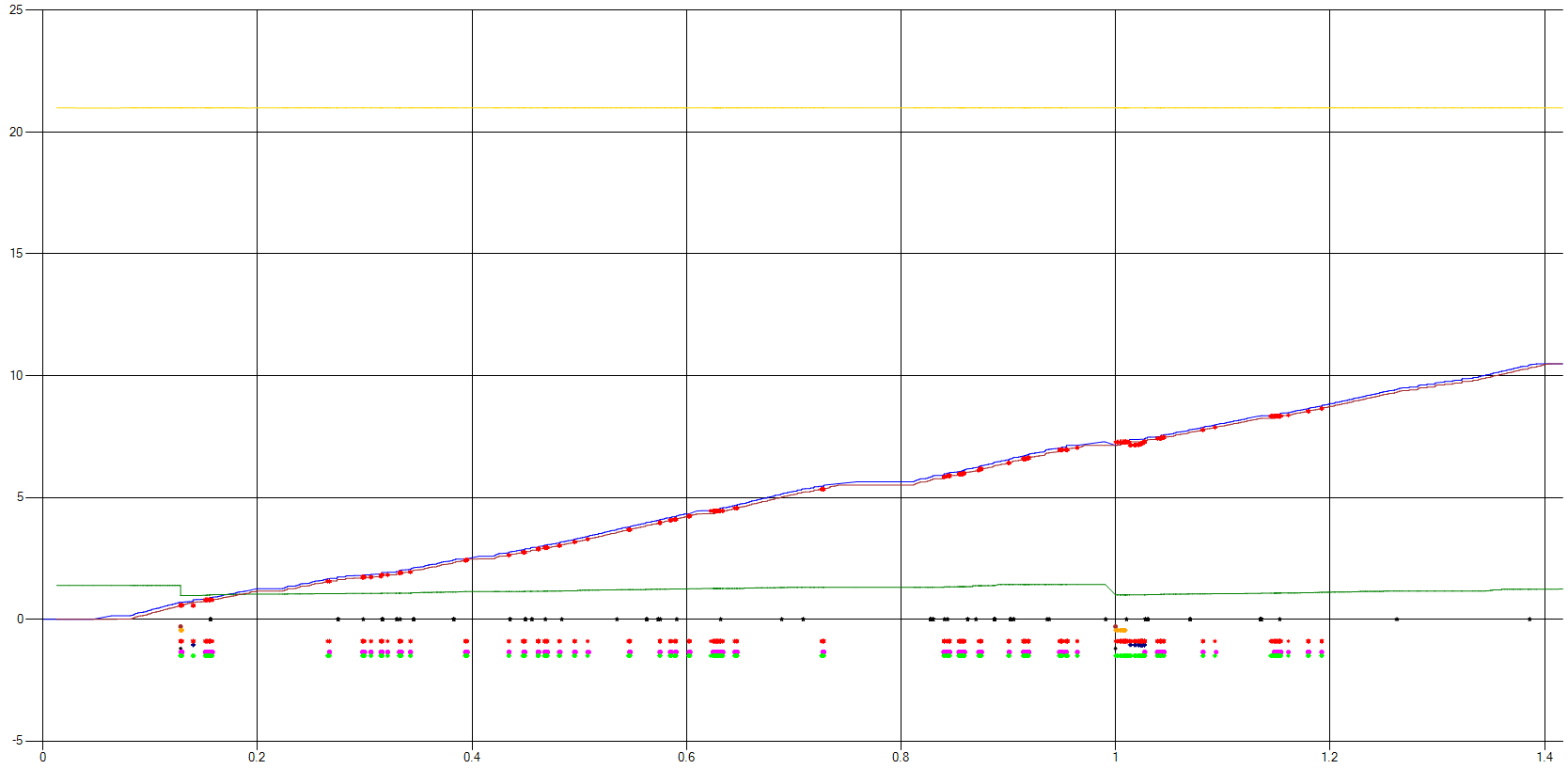

A graph of the Windows 11 trace data gathered by someone

with a “real world” setup (minimum RTT of about 9 milliseconds and using SMB

file copy to generate the traffic) and no patching looks like this:

In this case, the initial reordering window was large enough to allow almost all reordering to be detected (and therefore to avoid spurious retransmissions).

The trace in which D-SACK recognition is working shows that the congestion window reduction following a retransmission is not “undone” when a D-SACK shows the retransmission to have been spurious; this may mean that Windows 11 will not perform as well as some other operating systems when sending TCP data over a path on which some packet reordering is present.
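To illustrate what “undoing” a reduction involves, here is a hypothetical Python sketch loosely modelled on the D-SACK-based undo found in some other stacks (Linux, for example, in the spirit of RFC 3708). The sender remembers its pre-reduction state, counts retransmissions, and restores that state once D-SACKs have shown every retransmission to be spurious. The class and method names are invented, windows are counted in segments, and the 0.7 reduction factor is CUBIC's beta from RFC 8312. This is not a description of Windows behaviour.

```python
from dataclasses import dataclass

@dataclass
class UndoState:
    """Hypothetical congestion state with D-SACK-driven undo (segments)."""
    cwnd: int
    ssthresh: int
    prior_cwnd: int = 0
    prior_ssthresh: int = 0
    undo_retrans: int = 0  # retransmissions not yet shown to be spurious

    def on_enter_recovery(self) -> None:
        # Remember the state before the multiplicative decrease.
        self.prior_cwnd = self.cwnd
        self.prior_ssthresh = self.ssthresh
        self.undo_retrans = 0
        self.ssthresh = max(self.cwnd * 7 // 10, 2)  # CUBIC beta = 0.7
        self.cwnd = self.ssthresh

    def on_retransmit(self) -> None:
        self.undo_retrans += 1

    def on_dsack_for_retransmission(self) -> None:
        if self.undo_retrans > 0:
            self.undo_retrans -= 1
            if self.undo_retrans == 0:
                # Every retransmission was a duplicate: the reduction was
                # unnecessary, so restore the pre-reduction state.
                self.cwnd = max(self.cwnd, self.prior_cwnd)
                self.ssthresh = max(self.ssthresh, self.prior_ssthresh)
```

Usage: after `on_enter_recovery()` on a 100-segment window, cwnd drops to 70; two `on_retransmit()` calls followed by two `on_dsack_for_retransmission()` calls restore it to 100.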

There are two Microsoft-Windows-TCPIP events that indicate whether D-SACKs are being recognized. In the TcpReceiveSack event, there is a DSackCount member that accurately reflects the number of D-SACKs (as observed in the raw packet data) when D-SACK recognition is working. In the TcpSendTrackerUpdateReoWnd event, several members reflect the state of the RACK algorithm: Multiplier (RACK.reo_wnd_mult), Persist (RACK.reo_wnd_persist), Reownd (RACK.reo_wnd), ReorderingSeen (RACK.reordering_seen), DSackSeenOnLastAck, and DSackRound/DSackRoundValid (RACK.dsack_round); when D-SACK recognition is working and D-SACKs are present, this event contains meaningful data.
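These event members correspond to the state variables that RFC 8985 uses to adapt the reordering window. The following minimal Python sketch is written from the RFC 8985 reordering-window update pseudocode, not from the Windows implementation. The class and parameter names are hypothetical, DupThresh is taken as 3, and sequence-number wrap-around is ignored. It shows why D-SACK recognition matters: reo_wnd_mult, and with it the window, can only grow when a D-SACK is seen.

```python
from dataclasses import dataclass
from typing import Optional

DUP_THRESH = 3  # classic duplicate-ACK threshold

@dataclass
class RackReoWnd:
    min_rtt: float                     # seconds
    srtt: float                        # seconds
    reo_wnd_mult: int = 1
    reo_wnd_persist: int = 0
    dsack_round: Optional[int] = None  # SND.NXT when the current D-SACK round began
    reordering_seen: bool = False

    def update(self, snd_una: int, snd_nxt: int, dsack_on_ack: bool,
               exiting_recovery: bool, in_recovery: bool,
               segs_sacked: int) -> float:
        """Return the new reordering window in seconds (wrap-around ignored)."""
        # The D-SACK round ends once everything sent before it is acknowledged.
        if self.dsack_round is not None and snd_una >= self.dsack_round:
            self.dsack_round = None
        # Grow the multiplier at most once per round that sees a D-SACK.
        if self.dsack_round is None and dsack_on_ack:
            self.dsack_round = snd_nxt
            self.reo_wnd_mult += 1
            self.reo_wnd_persist = 16
        elif exiting_recovery:
            # Reset after 16 D-SACK-free recoveries.
            self.reo_wnd_persist -= 1
            if self.reo_wnd_persist <= 0:
                self.reo_wnd_mult = 1
        if not self.reordering_seen:
            if in_recovery or segs_sacked >= DUP_THRESH:
                return 0.0
        return min(self.reo_wnd_mult * self.min_rtt / 4, self.srtt)
```

If D-SACKs are never recognized, `dsack_on_ack` is always false, so `reo_wnd_mult` stays at 1 and the window never exceeds min_RTT/4.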

October 2022 Update: Windows 11 22H2 corrects the D-SACK recognition problem.

What tool do you use to generate these graphs?

The tool is a simple home-made program, using the .NET System.Windows.Forms.DataVisualization.Charting classes for the graphs.

Excellent post again, Gary.

I wonder if it would be a viable option to just replace parts of the Windows 10 (or 11) TCPIP stack for a performance boost.

Maybe a legit driver setup with an alternative network device.

Hopefully wouldn't take some hacky setup that relies on disabling PatchGuard etc., but maybe an okay tradeoff still.

Very interesting, thanks. One question: How did you get the data, i.e., the internal TCP states like cwnd and events shown in your graphs?

Hello Joerg/Jörg, the Microsoft-Windows-TCPIP Event Tracing for Windows (ETW) provider makes this information available. There are lots of tools (built-in and third party) that allow ETW to be controlled; logman, pktmon, "netsh trace", several PowerShell cmdlets and perhaps more are the built-in mechanisms. Gary
